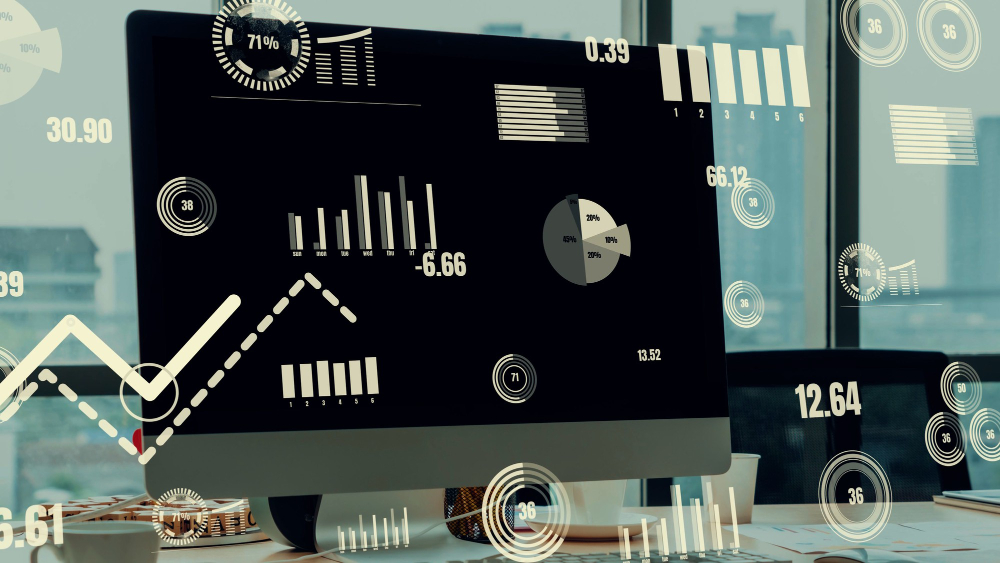

Big data analysis is an advanced process used to derive meaningful insights from massive and complex datasets where traditional methods fall short.

These datasets combine data from many sources, such as communications, sensor outputs, and customer interactions. Our goal is to extract strategic insights from this rapidly growing and diversifying data in order to optimize business processes.

Volume

Big data involves datasets that can reach petabytes or more. We use high-capacity infrastructures to collect and process these vast amounts of data.

Velocity

Big data is generated at high speed and requires rapid analysis. We enable high-speed processing of data streams from sources such as social media feeds and real-time sensors.
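To make the idea of analyzing data as it arrives more concrete, here is a minimal Python sketch of a rolling average computed over a stream of readings. It is an illustration only: the function name `rolling_mean`, the window size, and the sample readings are assumptions, and a production system would typically consume from a streaming platform rather than an in-memory list.

```python
from collections import deque


def rolling_mean(readings, window):
    """Yield the mean of the most recent `window` readings as each one arrives."""
    if window <= 0:
        raise ValueError("window must be positive")
    recent = deque(maxlen=window)
    total = 0.0
    for value in readings:
        if len(recent) == window:
            total -= recent[0]  # the oldest reading is about to be evicted
        recent.append(value)
        total += value
        yield total / len(recent)


# Hypothetical sensor readings; in practice these would come from a live feed.
sensor_stream = iter([20.0, 22.0, 24.0, 30.0])
for average in rolling_mean(sensor_stream, window=3):
    print(average)
```

Because the function is a generator, each result is available as soon as its reading arrives, without waiting for the full dataset.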

Variety

Big data comprises structured, semi-structured, and unstructured data types. To analyze this diversity, we employ flexible and powerful analytical tools.
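The difference between the three data types can be sketched in a few lines of Python. The example below is a simplified illustration using only the standard library; the function names and the email pattern are assumptions chosen for the example, and the pattern is deliberately simplistic.

```python
import csv
import io
import json
import re


def from_csv(text):
    """Structured input: every row has the same named columns."""
    return [dict(row) for row in csv.DictReader(io.StringIO(text))]


def from_json_lines(text):
    """Semi-structured input: one JSON object per line, fields may vary."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


def from_free_text(text):
    """Unstructured input: extract only what a simple pattern can recognize."""
    pattern = r"[\w.+-]+@[\w-]+\.[\w.-]+"  # rough email pattern, for illustration
    return [{"email": match} for match in re.findall(pattern, text)]


print(from_csv("id,name\n1,Ada\n"))
print(from_json_lines('{"id": 2}\n{"id": 3, "tags": ["vip"]}'))
print(from_free_text("Please contact ada@example.com today"))
```

Each function returns a list of dictionaries, so downstream steps can treat all three sources uniformly.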

Veracity

To ensure the accuracy of information within big data, we implement advanced validation and cleansing techniques, minimizing the impact of misleading or erroneous data.
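As a rough idea of what validation and cleansing can look like in practice, the following Python sketch rejects incomplete or implausible records and removes duplicates. The field names (`customer_id`, `amount`) and the rule that negative amounts are invalid are hypothetical, chosen only for the example.

```python
import math


def clean_record(raw):
    """Return a normalized record, or None if the record fails validation."""
    customer_id = str(raw.get("customer_id") or "").strip()
    if not customer_id:
        return None  # a record without an identifier cannot be trusted
    try:
        amount = float(raw.get("amount"))
    except (TypeError, ValueError):
        return None  # missing or non-numeric amount
    if not math.isfinite(amount) or amount < 0:
        return None  # assumed rule: amounts must be finite and non-negative
    return {"customer_id": customer_id, "amount": amount}


def cleanse(records):
    """Drop invalid records and exact duplicates, preserving input order."""
    seen = set()
    cleaned = []
    for raw in records:
        record = clean_record(raw)
        if record is None:
            continue
        key = (record["customer_id"], record["amount"])
        if key not in seen:
            seen.add(key)
            cleaned.append(record)
    return cleaned
```

Separating the per-record check from the deduplication pass keeps each rule easy to test and to replace.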

BIG DATA ANALYSIS PROCESS

After data collection, we follow the steps outlined below, using infrastructures such as Apache Hadoop and Apache Kafka:

Data Storage

We utilize modern data storage solutions like NoSQL databases (e.g., MongoDB, Cassandra) to store unstructured and semi-structured data.

Data Processing

With distributed processing engines such as Spark and Flink, we process large datasets quickly and in parallel, preparing them for analysis.
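The split, process, and merge pattern behind such engines can be illustrated with a small Python sketch. This is not Spark or Flink code: it uses a thread pool from the standard library as a stand-in so that the example stays self-contained, and the function names are assumptions. It shows the shape of the computation, not the performance of a real cluster.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def count_words(partition):
    """Map step: count words within a single partition of lines."""
    counts = Counter()
    for line in partition:
        counts.update(line.lower().split())
    return counts


def parallel_word_count(lines, partitions=4):
    """Split lines into partitions, count each concurrently, then merge."""
    partitions = max(1, partitions)
    chunks = [lines[i::partitions] for i in range(partitions)]
    total = Counter()
    with ThreadPoolExecutor(max_workers=partitions) as pool:
        for partial in pool.map(count_words, chunks):
            total.update(partial)  # reduce step: merge partial results
    return total


print(parallel_word_count(["big data", "data streams", "big data analysis"]))
```

Because each partition is counted independently, the same logic can in principle be spread across many machines, which is what distributed engines automate.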

Data Analysis

Using machine learning, natural language processing, and predictive algorithms, we extract meaningful insights from large datasets. This step allows us to identify critical patterns and trends within the data.
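As a very small example of identifying a trend and making a prediction, the Python sketch below fits a least-squares line to a series of evenly spaced observations and extrapolates one step ahead. It is a simplified stand-in for the predictive algorithms mentioned above; the function names and the sample figures are hypothetical.

```python
def fit_trend(values):
    """Fit a least-squares line through the points (0, values[0]), (1, values[1]), ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def forecast_next(values):
    """Extrapolate the fitted line one step past the last observation."""
    slope, intercept = fit_trend(values)
    return intercept + slope * len(values)


# Hypothetical weekly order counts.
weekly_orders = [120.0, 135.0, 150.0, 170.0]
slope, _ = fit_trend(weekly_orders)
print("trend per week:", slope)
print("next week forecast:", forecast_next(weekly_orders))
```

A positive slope indicates an upward trend. Real workloads usually call for richer models, but the fit-then-predict structure is the same.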